Next, in Kibana, open the “Discover” page, type “syslog” in the KQL string and press Enter. You will see the log lines from the syslog file “secure” (Pictures 2.2.3 and 2.2.4).

Picture 2.2.4. View syslog log lines from “/var/log/secure”.

Now you have learned how to send any logs and data to Elasticsearch with Filebeat.

Additional information: how to install the nginx web server

Log in (ssh) to the remote host web-testsoft-net (192.168.33.95) and install nginx, then allow HTTP in the firewall:

sudo firewall-cmd --permanent --zone=public --add-service=http

Open the web address in a browser: Picture 2.1.1.

INSTALL AND CONFIG ELASTICSEARCH, LOGSTASH, KIBANA
REMOTE SERVER CONFIG FOR LOG SHIPPING (FILEBEAT)

Part 2.2. When driving data into Elasticsearch from Filebeat, the default behaviour is for all data to be sent to the same destination index regardless of the source of the data. This may not always be desirable, since data from different sources may have different access requirements, different retention policies, or different ingest processing requirements.
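The Discover search for “syslog” can also be expressed as a request to the Elasticsearch search API. The sketch below is only an approximation: KQL free text searches all fields, while this request matches the `tags` field only. It assumes (none of this is stated in the article) that Elasticsearch answers plain HTTP without authentication on its default port 9200 at 192.168.33.90, and that events land in indices matching `filebeat-*`; `build_tag_search` and `fetch_messages` are hypothetical helpers.

```python
import json
import urllib.request

# Assumptions, not taken from the article: Elasticsearch serves plain HTTP
# on its default port 9200 at 192.168.33.90 without authentication, and the
# shipped events are stored in indices matching "filebeat-*".
ES_URL = "http://192.168.33.90:9200"
INDEX_PATTERN = "filebeat-*"


def build_tag_search(tag, size=10):
    """Build (without sending) a _search request for events carrying `tag`."""
    body = {
        "size": size,
        # Newest events first, similar to the default Discover ordering.
        "sort": [{"@timestamp": {"order": "desc"}}],
        # Filebeat stores the input's `tags` setting in the "tags" field.
        "query": {"match": {"tags": tag}},
    }
    return urllib.request.Request(
        f"{ES_URL}/{INDEX_PATTERN}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def fetch_messages(request, timeout=5.0):
    """Send the request and return the "message" field of each hit."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        hits = json.load(resp)["hits"]["hits"]
    return [hit["_source"].get("message") for hit in hits]
```

On a machine that can reach the cluster, `fetch_messages(build_tag_search("syslog"))` should return the newest lines shipped from “/var/log/secure”. If security is enabled on the cluster, the request would also need credentials and probably `https`.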

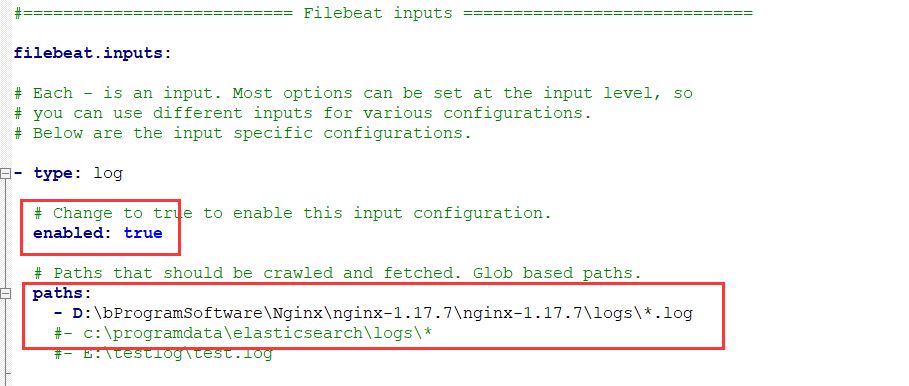

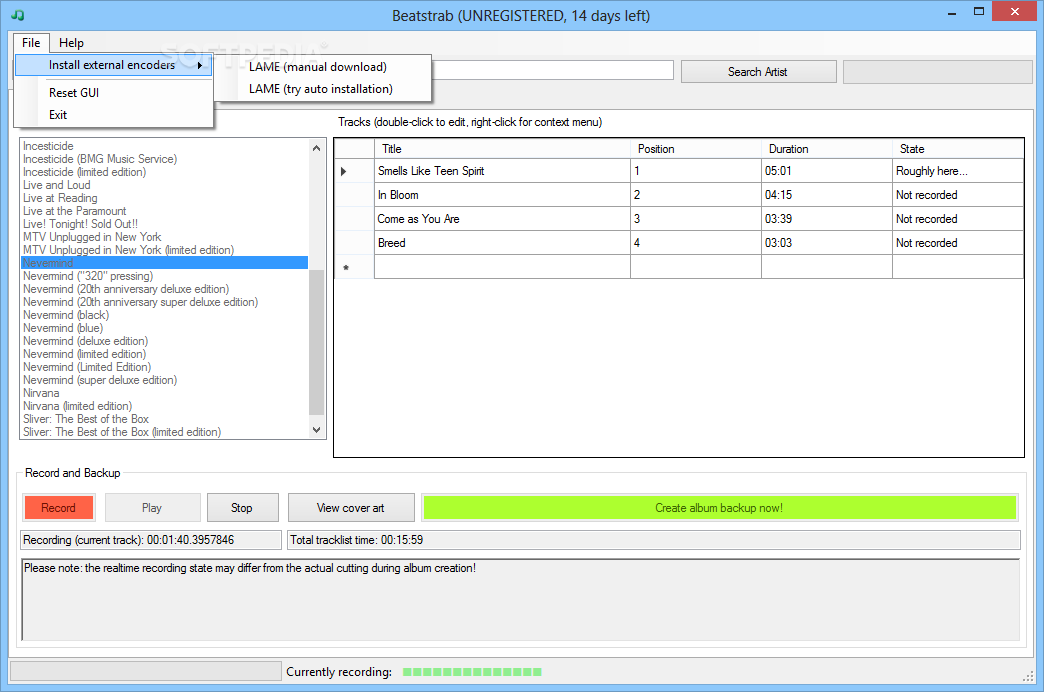

After adding the Elasticsearch repository, run the command to install Filebeat:

Edit the default Filebeat config. Disable the output to the local Elasticsearch (section “Elasticsearch Output”) and set the remote IP 192.168.33.90 and Logstash port 5044 in the section “Logstash Output”. The Elasticsearch Output section of the default file contains these comments:

# Protocol - either `http` (default) or `https`.
# Authentication credentials - either API key or username/password.

Enable the nginx and auditd modules in Filebeat to read nginx and Linux audit logs:

You can view all available modules in Filebeat:

After all config changes, restart Filebeat and check its status:

sudo service filebeat status

Picture 2.1.2.

Open the web server page address in a browser and refresh the page several times. Then open Kibana and enter the web server IP address 192.168.33.95 in the KQL string:

Picture 2.1.3. Elasticsearch remote log view from 192.168.33.95.

Excellent, you have configured the web server to ship logs to the remote Elasticsearch.

Filebeat setup for custom file read and log shipping

To ship any log file, you need to specify where Filebeat should read the logs from. You can do this in the configuration file “/etc/filebeat/filebeat.yml”. I’ll show an example with the log file “secure”, which is located in the standard Linux directory “/var/log/”. Picture 2.2.1 shows what the standard Linux security log looks like, so let’s send this data to Elasticsearch.

Open “filebeat.yml” for editing and add these lines (Picture 2.2.2, Filebeat setup for reading “/var/log/secure” log):

# Change to true to enable this input configuration.
# Paths that should be crawled and fetched.

Tags: in this example, I set the tag “syslog”, because the file “secure” uses the standard Linux syslog format. For your own log files, you can set any value in the “tags” field. In the following articles, I will show how to use tags in Logstash parsing.

Now exit the SSH session and log in to the web server over SSH again; the new login adds fresh entries to “/var/log/secure”. After logging in again, open the Kibana URL in a browser: “” and enter Kibana.
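To make the edits above concrete, here is a minimal sketch that renders the custom log input and the Logstash output as filebeat.yml text. It assumes Filebeat 7.x key names and the `log` input type (newer releases prefer the `filestream` input); the helper `render_filebeat_fragment` is hypothetical, while the path, tag and Logstash address are the values used in this article.

```python
# Hypothetical helper (not part of Filebeat): renders the filebeat.yml
# fragments described above as plain YAML text.
def render_filebeat_fragment(paths, tags, logstash_hosts):
    """Return a filebeat.yml fragment with one log input and a Logstash output."""

    def flow_list(items):
        # YAML flow sequence of double-quoted strings, e.g. ["syslog"]
        return "[" + ", ".join(f'"{item}"' for item in items) + "]"

    lines = [
        "filebeat.inputs:",
        "- type: log",
        "  # Change to true to enable this input configuration.",
        "  enabled: true",
        "  # Paths that should be crawled and fetched.",
        "  paths:",
    ]
    # One block-sequence entry per file or glob to read.
    lines += [f"    - {path}" for path in paths]
    # Tags are added to the "tags" field of every event from this input.
    lines.append(f"  tags: {flow_list(tags)}")
    lines += [
        "",
        # Only one output may be enabled: the whole Elasticsearch Output
        # section (including its hosts line) has to stay commented out.
        "#output.elasticsearch:",
        "output.logstash:",
        f"  hosts: {flow_list(logstash_hosts)}",
    ]
    return "\n".join(lines) + "\n"


print(render_filebeat_fragment(["/var/log/secure"], ["syslog"], ["192.168.33.90:5044"]))
```

After merging such a fragment into “/etc/filebeat/filebeat.yml”, `filebeat test config` can check the file before Filebeat is restarted.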

Filebeat install and config built-in modules for remote log shipping
Filebeat setup for custom file read and log shipping

Filebeat install and config built-in modules for remote log shipping

An example of setting up Filebeat is shown on nginx web server logs. The easiest way to transfer logs to a remote host is to use the built-in Filebeat modules.

Log in (ssh) to the web server with nginx (192.168.33.95) and add the Elasticsearch repository: create the file and copy the text into it:
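Before pointing Filebeat at the remote host, it can help to confirm that the web server reaches Logstash at all. Filebeat has its own check for this, `filebeat test output`; the sketch below is a generic TCP reachability test, assuming Logstash listens on 192.168.33.90:5044 as configured in this article. The helper `port_is_open` is hypothetical.

```python
import socket

# Values from the article: Logstash on the ELK host, Beats input port 5044.
LOGSTASH_HOST = "192.168.33.90"
LOGSTASH_PORT = 5044


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts (socket.timeout is an OSError
        # subclass) and unreachable hosts.
        return False
```

Run on the web server, `port_is_open(LOGSTASH_HOST, LOGSTASH_PORT)` returning `False` usually points to a firewall rule or a Logstash instance that is not listening, rather than to the Filebeat configuration.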
